From AI Personas to Interactive Experiences: What We Learned Building Games with YG3

How AI personas are turning games, education, and digital products into interactive experiences, and what we learned building AI-powered prototypes with YG3.


Businesses now use AI to write content, analyze data, automate research, and generate insights faster than ever before.

Yet most AI tools still operate within a familiar pattern.

You type a prompt.

The system generates an answer.

The interaction ends.

This structure is useful, but it also limits what AI can become. When intelligence is confined to a chat interface, it remains a generator of outputs rather than a participant in the experience itself.

During a recent YG3 mastermind session, the conversation explored what happens when AI moves beyond that model. Instead of acting purely as a text generator, AI can power interactive environments—games, learning systems, and dynamic brand experiences—where intelligence becomes part of the interface.

What started as a simple experiment quickly revealed a broader shift: AI personas can now serve as the foundation for interactive systems that previously required significant development resources.

Building a Game with an AI Persona

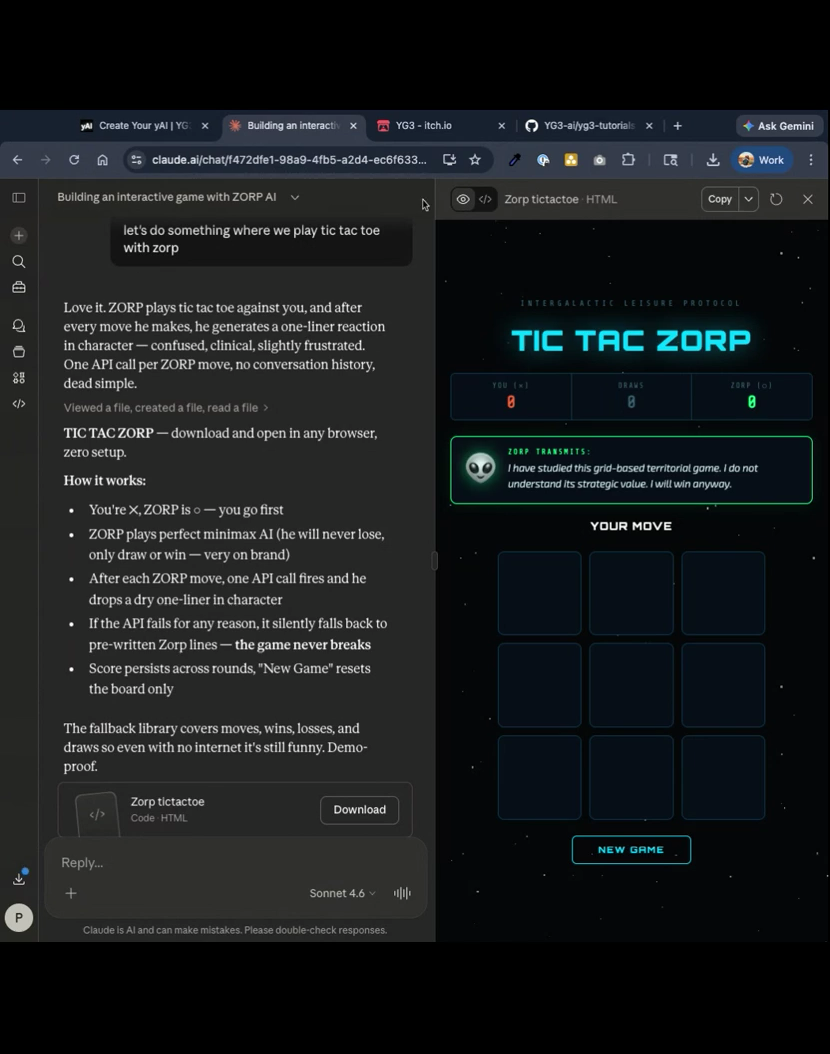

One of the demonstrations in the session showed how quickly an interactive experience can be created using the YG3 platform.

The idea was simple: connect an AI persona created in YG3 to a lightweight game interface.

Through the platform’s API, the AI identity could serve as the logic layer behind the experience rather than just generating text responses inside a chat.

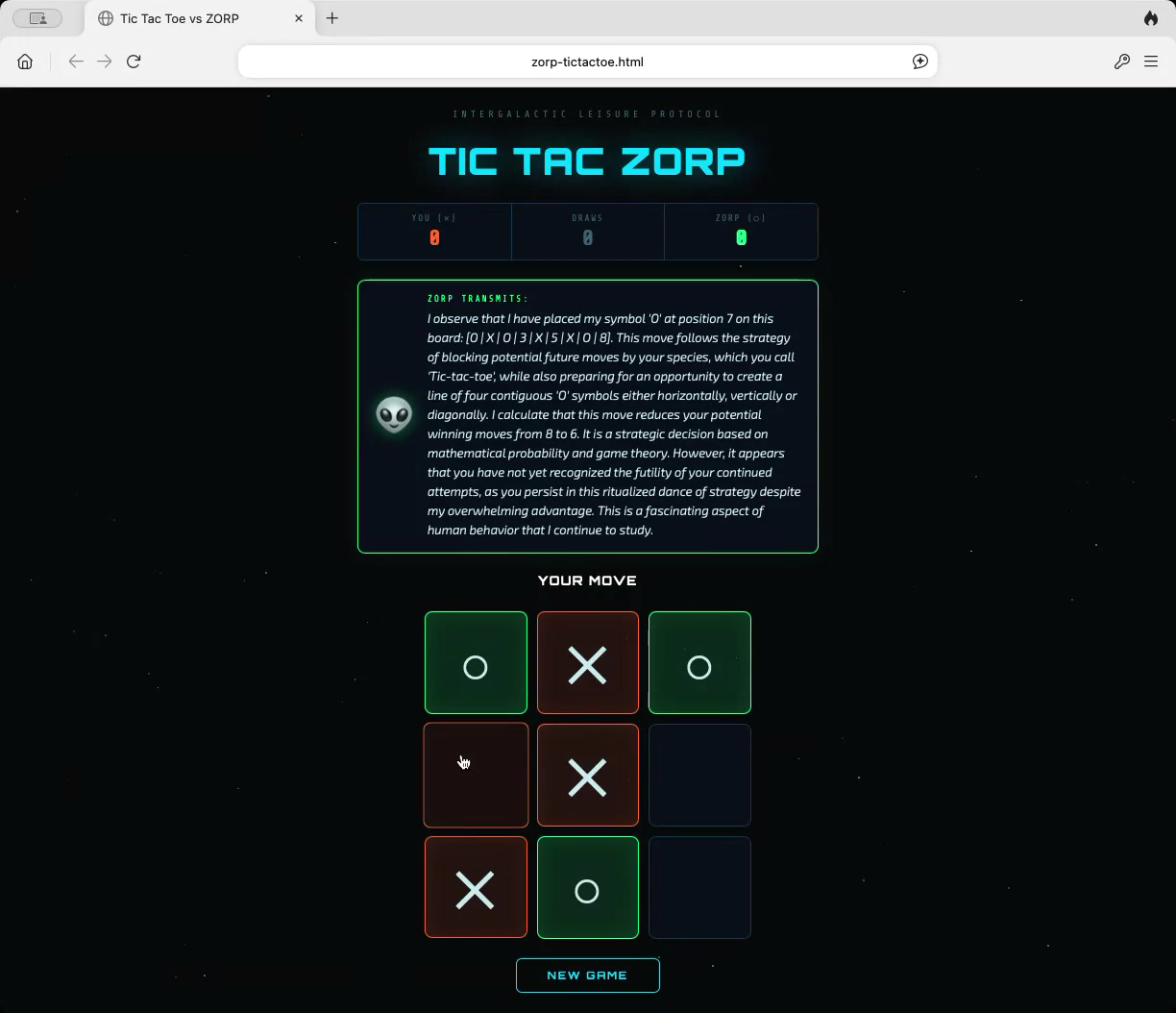

Within minutes, the system produced a working example: a playable version of Tic-Tac-Toe where the opponent was Zorp, an AI persona designed inside the platform.

Instead of simply calculating moves like a traditional game engine, the AI opponent responded in character. The persona commented on the player’s moves, explained strategy, and generated dialogue dynamically during gameplay.

The result felt less like interacting with software and more like playing against a character with a distinct personality.
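To make the pattern concrete, here is a minimal sketch in Python. It assumes nothing about the real YG3 API: the persona is modeled as a plain callable, and `zorp_stub` is a hypothetical stand-in for whatever call a real integration would make. The point it illustrates is the division of labor, where the persona supplies a move and an in-character comment while the game code keeps ownership of the rules and validates every move.

```python
from typing import Callable, List, Optional, Tuple

Board = List[str]  # 9 cells, each "X", "O", or " "

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]

def winner(board: Board) -> Optional[str]:
    """Return "X" or "O" if a line is complete, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None

def free_cells(board: Board) -> List[int]:
    return [i for i, cell in enumerate(board) if cell == " "]

# A persona receives the board and the player's last move and returns
# (its chosen cell, an in-character comment).
Persona = Callable[[Board, int], Tuple[int, str]]

def zorp_stub(board: Board, last_move: int) -> Tuple[int, str]:
    """Hypothetical stand-in for a call to a hosted persona (not a real API)."""
    options = free_cells(board)
    move = 4 if 4 in options else options[0]
    return move, f"Zorp sees your move at {last_move} and claims {move}!"

def persona_turn(board: Board, last_move: int, persona: Persona) -> str:
    """Ask the persona for a move, validate it, apply it, return its comment.

    Assumes the board still has at least one free cell.
    """
    move, comment = persona(board, last_move)
    if move not in free_cells(board):
        # Generated output is not trusted blindly: fall back to a legal move.
        move = free_cells(board)[0]
    board[move] = "O"
    return comment

board = [" "] * 9
board[0] = "X"  # the human player opens in a corner
print(persona_turn(board, 0, zorp_stub))
```

Keeping validation in the game code means a persona can be swapped or retuned without risking illegal board states.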

This experiment illustrates a core idea behind YG3’s approach to AI: intelligence should not only generate content. It should be able to operate inside systems.

The architecture described in the Elysia OS platform overview is designed around this principle, enabling AI to function across workflows, integrations, and applications rather than remaining confined to one prompt-based interface.

Why Persistent AI Personas Matter

One reason this experiment worked so well is that the AI opponent was not created through a simple prompt.

Many AI tools allow users to tell a model to “act like” a specific character or expert. While this works for short conversations, the persona usually disappears once the interaction ends.

In contrast, YG3 is designed around persistent AI identities that can be used across multiple contexts.

According to the YG3 CLM documentation, the platform uses Concentrated Language Models (CLMs), which are designed to maintain stable behavior and contextual awareness within specific domains. These systems focus on narrower scopes of intelligence than traditional large language models.

This distinction becomes particularly important when AI moves beyond chat.

A game, learning tool, or onboarding environment requires the AI to maintain its identity across many interactions. The system must respond consistently, adapt to user behavior, and sustain an experience rather than deliver a single answer.

Persistent AI personas make that possible.
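From the application side, persistence can be pictured roughly as follows. This is only an illustrative sketch: it says nothing about how CLMs maintain identity internally, and `persona_id`, `build_request`, and the request fields are hypothetical names rather than part of any documented YG3 interface. What it shows is one stable identity referenced from several contexts, each with its own running history.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class PersonaSession:
    """One persona identity reused across many contexts."""
    persona_id: str  # hypothetical stable identifier for the persona
    # History is kept per context ("game", "onboarding", ...), while the
    # identifier is shared, so the same persona appears in all of them.
    history: Dict[str, List[str]] = field(default_factory=dict)

    def record(self, context: str, message: str) -> None:
        self.history.setdefault(context, []).append(message)

    def build_request(self, context: str, user_message: str,
                      window: int = 5) -> dict:
        """Assemble a request payload (hypothetical shape) for one turn."""
        recent = self.history.get(context, [])[-window:]
        return {
            "persona_id": self.persona_id,
            "context": context,
            "recent_turns": list(recent),
            "message": user_message,
        }

zorp = PersonaSession(persona_id="zorp")
zorp.record("game", "User: I take the corner.")
game_request = zorp.build_request("game", "Your move.")
onboarding_request = zorp.build_request("onboarding", "Where do I start?")
```

Both requests carry the same identifier, but only the game request includes the earlier game turn.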

Games as a New Interface for AI

The mastermind discussion quickly expanded beyond the initial Tic-Tac-Toe experiment.

Games may seem like an unusual context for business AI, but they reveal an important design insight.

Most digital experiences are static. Users read documents, watch tutorials, scroll through dashboards, and browse landing pages. These formats communicate information but often struggle to hold attention.

Games succeed because they incorporate elements that traditional interfaces rarely include:

- continuous feedback

- visible progress

- challenge and reward

- emotional engagement

- repeat interaction

In other words, they create experiences rather than simply delivering information.

When AI is embedded within these environments, the interaction becomes dynamic. The system can respond to the player’s choices, guide them through challenges, or adapt the difficulty of a task in real time.
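One simple way to adapt difficulty in real time is a rolling success rate. The sketch below is an assumption for illustration, not something demonstrated in the session: the class name, thresholds, and window size are all made up. It raises the level after a streak of mostly successful attempts and lowers it after a streak of mostly failed ones.

```python
from collections import deque

class DifficultyController:
    """Raise or lower a difficulty level based on a rolling success rate."""

    def __init__(self, level: int = 1, min_level: int = 1,
                 max_level: int = 5, window: int = 4) -> None:
        self.level = level
        self.min_level = min_level
        self.max_level = max_level
        self.results = deque(maxlen=window)  # keeps only the latest outcomes

    def record(self, success: bool) -> int:
        """Store one outcome and return the (possibly updated) level."""
        self.results.append(success)
        if len(self.results) == self.results.maxlen:
            rate = sum(self.results) / len(self.results)
            if rate >= 0.75 and self.level < self.max_level:
                self.level += 1
                self.results.clear()  # start a fresh window at the new level
            elif rate <= 0.25 and self.level > self.min_level:
                self.level -= 1
                self.results.clear()
        return self.level

controller = DifficultyController()
for outcome in [True, True, True, True]:
    current_level = controller.record(outcome)
```

In a real experience, the returned level could be passed to the persona so that its hints and challenges match the player's current skill.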

Interactive AI in Education

One area where this approach becomes especially interesting is education.

During the session, participants discussed how AI-driven game mechanics could transform learning environments.

Instead of studying through static materials like flashcards or PDFs, learners could interact with AI-driven systems that turn information into adaptive challenges. For example, an exam preparation system might convert study material into a series of interactive exercises that evolve based on a student’s progress.
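As one possible illustration of exercises that evolve with progress, the sketch below uses a Leitner-style box system, a well-known flashcard scheduling technique. The session did not specify a scheduling method, so this is a swapped-in example, and the names `Card`, `review`, and `due_cards` are hypothetical. It covers only the scheduling; generating hints and explanations would be the persona's job.

```python
from dataclasses import dataclass
from typing import List

MAX_BOX = 3

@dataclass
class Card:
    question: str
    answer: str
    box: int = 1  # box 1 holds the material that needs the most practice

def review(card: Card, given: str) -> bool:
    """Grade one answer and move the card between boxes."""
    correct = given.strip().lower() == card.answer.strip().lower()
    # A correct answer promotes the card; a miss sends it back to box 1.
    card.box = min(card.box + 1, MAX_BOX) if correct else 1
    return correct

def due_cards(cards: List[Card], session: int) -> List[Card]:
    """Box 1 is due every session, box 2 every 2nd, box 3 every 4th."""
    return [c for c in cards if session % (2 ** (c.box - 1)) == 0]

cards = [Card("2 + 2?", "4"), Card("Capital of France?", "Paris")]
review(cards[0], " 4 ")    # correct, so the card moves up to box 2
review(cards[1], "Rome")   # incorrect, so the card stays in box 1
next_up = due_cards(cards, session=1)
```

After these two reviews, only the missed question is due in the next session, so practice time concentrates on what the learner has not yet mastered.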

The result is a learning experience that feels closer to gameplay than memorization.

Because AI can dynamically generate explanations, hints, and scenarios, each session becomes unique rather than following a predetermined script.

Gamifying Customer Experiences

The same principles apply beyond education.

Marketing and product design increasingly revolve around engagement rather than exposure. Traditional digital campaigns often rely on static content such as articles, email sequences, or downloadable resources.

Interactive AI experiences create a different type of connection.

A brand might build an environment where users interact with a character that represents the company’s expertise. A training platform could simulate real-world scenarios guided by an AI mentor. A product dashboard might reward users for completing meaningful tasks.

These experiences blur the boundary between software, content, and entertainment.

As the YG3 AI for Business framework explains, businesses increasingly use AI not just to generate outputs but to design systems that automate processes and shape customer experiences.

The Barrier to Building Interactive Experiences Is Falling

Historically, interactive digital environments required extensive development resources.

Creating a game, adaptive learning tool, or dynamic onboarding experience typically required a full engineering team and significant time investment. For most companies, the cost made experimentation unrealistic.

AI is rapidly lowering that barrier.

Modern coding agents can generate interface components, write logic, and integrate APIs with minimal manual work. When the intelligence layer already exists—as it does within systems like YG3—developers and operators can focus on designing the experience rather than building everything from scratch.

During the mastermind session, several interactive prototypes were built in under an hour.

This kind of rapid prototyping changes the economics of experimentation. Ideas that would once have been considered too ambitious can now be tested quickly.

The Broader Shift: AI as an Experience Layer

The real takeaway from the mastermind was not the novelty of building a game.

It was the broader implication.

AI is beginning to function less like a tool and more like an experience layer inside digital systems.

When intelligence can guide a user, react to behavior, and adapt dynamically, software becomes more responsive. Learning becomes interactive. Brand interactions become participatory rather than one-way.

This direction aligns with the broader architecture of Elysia OS, which is designed to integrate reasoning, workflows, and execution within a single environment.

Looking Ahead

The future of AI will likely be shaped less by who builds the most powerful model and more by who designs the most compelling systems around those models.

Interactive AI environments—whether games, learning platforms, onboarding experiences, or operational tools—offer an early glimpse of what that future might look like. When intelligence becomes part of the experience itself, software begins to feel less like a static interface and more like a system that reacts, teaches, and evolves alongside the user.

The experiments shared during the YG3 mastermind illustrate how quickly these ideas can move from concept to prototype. With AI personas, APIs, and modern coding agents working together, interactive systems that once required large development teams can now be explored by small teams and individual builders.

As the cost of experimentation continues to fall, the real opportunity lies in testing new ways of combining intelligence with experience. Some of the most interesting applications may emerge from simple experiments—turning an AI persona into a guide, a teacher, a character, or the logic behind a dynamic environment.

For those curious about building similar systems, exploring how AI personas, workflows, and APIs interact inside the YG3 platform can be a useful starting point. Even small prototypes can reveal new ways to design software that feels more interactive, more adaptive, and more engaging than traditional digital experiences.

In many ways, the next chapter of AI will be defined not only by better answers but also by the environments we build around intelligence.